Agenta vs qtrl.ai

Side-by-side comparison to help you choose the right tool.

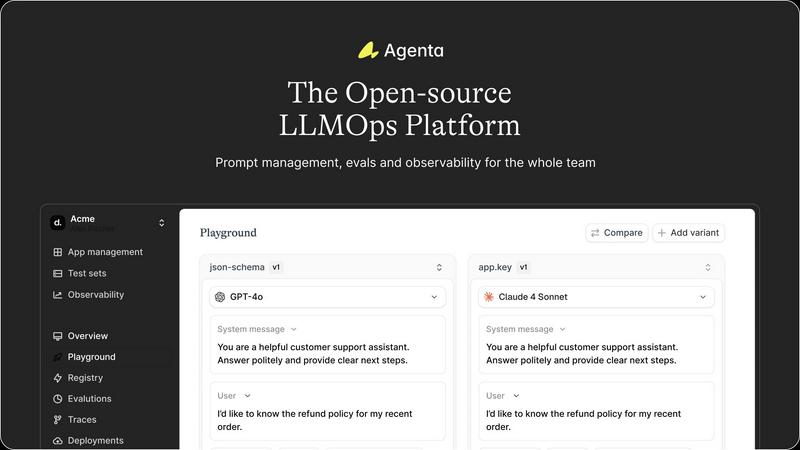

Agenta is an open-source platform that helps teams build and manage reliable AI apps together.

Last updated: March 1, 2026

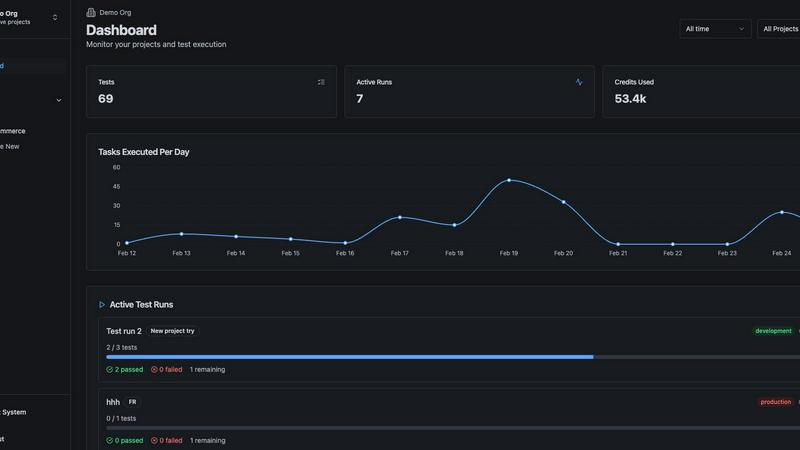

qtrl.ai

Scale your QA testing with AI agents while keeping full control and oversight.

Last updated: March 4, 2026


Feature Comparison

Agenta

Unified Playground

Agenta provides a unified playground where you can safely experiment with different prompts and models side-by-side in one central interface. This eliminates the need to juggle multiple tools or windows. Found an error in production? You can easily save it to a test set and use it directly in the playground to debug and iterate, making prompt engineering a collaborative and data-driven process.

Automated Evaluation

Replace guesswork with evidence using Agenta's systematic evaluation framework. You can create automated tests to validate every change to your LLM application. The platform supports any evaluator you need, including LLM-as-a-judge, built-in metrics, or your own custom code. Crucially, you can evaluate the full trace of an agent's reasoning, not just the final output, to pinpoint exactly where things go right or wrong.
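To make the idea of a custom code evaluator concrete, here is a minimal sketch in plain Python. It does not use Agenta's SDK: `TestCase`, `exact_match`, `evaluate`, and `fake_app` are hypothetical names invented for illustration, and `fake_app` stands in for a real LLM call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class TestCase:
    prompt: str
    expected: str


def exact_match(output: str, expected: str) -> float:
    """Hypothetical custom evaluator: 1.0 when the normalized strings match."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def evaluate(
    app: Callable[[str], str],
    test_set: list[TestCase],
    evaluator: Callable[[str, str], float],
) -> float:
    """Run the app on every test case and return the mean evaluator score."""
    if not test_set:
        raise ValueError("test_set must not be empty")
    scores = [evaluator(app(case.prompt), case.expected) for case in test_set]
    return sum(scores) / len(scores)


def fake_app(prompt: str) -> str:
    # Stand-in for a real LLM call (assumption for this sketch).
    answers = {"Capital of France?": "Paris", "2 + 2?": "5"}
    return answers.get(prompt, "")


test_set = [TestCase("Capital of France?", "paris"), TestCase("2 + 2?", "4")]
score = evaluate(fake_app, test_set, exact_match)
print(f"mean score: {score:.2f}")
```

Comparing the mean score before and after a prompt change is one simple way to check whether the change caused a regression. An LLM-as-a-judge evaluator would fit the same `evaluator` slot, with a model call in place of the string comparison.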

Comprehensive Observability

Gain full visibility into your live AI applications with detailed tracing for every request. This allows you to quickly debug systems and find the exact failure points when things go wrong. You can annotate traces with your team or gather feedback from users directly within the platform. Any trace can be turned into a test case with a single click, creating a powerful feedback loop.
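The trace-to-test-case loop described above can be pictured with a small, generic sketch. The data model here (`Span`, `Trace`, the `flagged` annotation) and the helper `trace_to_test_case` are assumptions made for illustration, not Agenta's actual trace schema or API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    name: str
    input: str
    output: str
    flagged: bool = False  # set by a reviewer who annotates the trace


@dataclass
class Trace:
    trace_id: str
    spans: list[Span] = field(default_factory=list)


def first_flagged_step(trace: Trace) -> Optional[str]:
    """Return the name of the first span a reviewer flagged, if any."""
    for span in trace.spans:
        if span.flagged:
            return span.name
    return None


def trace_to_test_case(trace: Trace, corrected_output: str) -> dict:
    """Turn a production trace into a regression test case."""
    if not trace.spans:
        raise ValueError("trace has no spans")
    return {
        "source_trace": trace.trace_id,
        "input": trace.spans[0].input,  # the original user request
        "expected": corrected_output,   # what the app should have said
        "failed_step": first_flagged_step(trace),
    }


trace = Trace(
    "t-123",
    [
        Span("retrieve", "What is our refund window?", "docs: policy.md"),
        Span("generate", "docs: policy.md", "60 days", flagged=True),
    ],
)
case = trace_to_test_case(trace, corrected_output="30 days")
print(case)
```

Keeping the flagged step next to the input and the expected output records where in the chain the failure happened, which is the information a full-trace evaluation needs.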

Team Collaboration Hub

Agenta breaks down silos by providing a shared workspace for your entire team. It offers a safe, no-code UI for domain experts to edit and experiment with prompts. Product managers and experts can run evaluations and compare experiments directly from the interface, while developers work via the full-featured API. This parity between UI and API workflows brings everyone into one cohesive development process.

qtrl.ai

Enterprise-Grade Test Management

qtrl provides a robust, centralized foundation for all your QA activities. You can create, organize, and manage test cases, plan comprehensive test runs, and trace everything back to specific requirements. This ensures full visibility and creates detailed audit trails, which is essential for teams that need to meet compliance standards. It supports both manual testing workflows and automated processes, all in one organized place.
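As a rough illustration of what tracing tests back to requirements means in practice, the sketch below derives a per-requirement status from test results. The data shapes and the function `requirement_status` are hypothetical and are not taken from qtrl's product or API.

```python
from collections import defaultdict


def requirement_status(
    requirements: list[str],
    test_cases: list[dict],
    results: dict[str, str],
) -> dict[str, str]:
    """Map each requirement to 'uncovered', 'not run', 'failing', or 'passing'."""
    linked: dict[str, list[str]] = defaultdict(list)
    for case in test_cases:
        for req in case["requirements"]:
            linked[req].append(case["id"])

    status = {}
    for req in requirements:
        outcomes = [results.get(test_id) for test_id in linked.get(req, [])]
        if not outcomes:
            status[req] = "uncovered"   # no test case references it
        elif any(outcome is None for outcome in outcomes):
            status[req] = "not run"     # linked test has no result yet
        elif all(outcome == "pass" for outcome in outcomes):
            status[req] = "passing"
        else:
            status[req] = "failing"
    return status


requirements = ["REQ-1", "REQ-2", "REQ-3"]
test_cases = [
    {"id": "TC-1", "requirements": ["REQ-1"]},
    {"id": "TC-2", "requirements": ["REQ-2"]},
]
results = {"TC-1": "pass", "TC-2": "fail"}
status = requirement_status(requirements, test_cases, results)
print(status)
```

A report like this shows at a glance which requirements have no test at all, which is typically the first question in a compliance review.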

Progressive AI Automation

This feature allows you to adopt AI at your own pace. You can start by writing simple, high-level test instructions in plain English, and qtrl will execute them precisely. As you gain confidence, you can let qtrl's AI suggest and generate automated tests for you. Every AI-generated step is fully reviewable and editable, so you maintain complete oversight and control throughout your automation journey.

Autonomous QA Agents

qtrl's AI-powered agents act like intelligent team members that can execute testing tasks. You can instruct them to run tests on demand or set them to run continuously. They operate across multiple browsers and in real environments, not simulations, and follow the rules and permissions you set. This allows you to scale test execution without a proportional increase in manual effort.

Adaptive Memory & Governance

The platform builds a living knowledge base of your application by learning from every test run, exploration, and identified issue. This "Adaptive Memory" makes test generation smarter and more context-aware over time. Crucially, all this happens within a governance-first framework. You have full visibility into agent actions, can set permission levels, and your secrets are kept encrypted and secure, never exposed to the AI.

Use Cases

Agenta

Streamlining Prompt Engineering Workflows

Teams can centralize their prompt development, moving away from scattered documents in Slack, Google Sheets, and emails. With version history and side-by-side comparison, developers and domain experts can collaboratively iterate on prompts, test them with real data, and track all changes systematically, leading to more reliable and performant prompts.

Running Rigorous LLM Application Tests

Before deploying any change, teams can establish a rigorous evaluation process. They can build test sets from production errors, use automated evaluators (like LLM judges) to score outputs, and integrate human feedback from experts. This ensures every update is backed by data, replacing "vibe testing" and catching performance regressions before changes reach production.

Debugging Complex AI Agents in Production

When a multi-step AI agent behaves unexpectedly in a live environment, Agenta's observability tools shine. Engineers can trace every step of the agent's reasoning chain, annotate where failures occurred, and immediately use those problematic traces to create new test cases. This turns painful guesswork into a structured debugging workflow.

Enabling Cross-Functional AI Development

Agenta empowers non-technical team members to contribute directly to the AI development lifecycle. Product managers can define evaluation criteria and run tests, while subject matter experts can tweak prompts in a safe UI environment without writing code. This collaboration accelerates iteration and ensures the final product aligns with business and domain expertise.

qtrl.ai

Scaling Beyond Manual Testing

For QA teams overwhelmed by repetitive manual checks, qtrl offers a smooth path forward. Teams can begin by structuring their existing manual test cases in the platform. Then, they can progressively automate the most tedious and high-value tests using AI, freeing up human testers to focus on more complex, exploratory work and significantly increasing test coverage and speed.

Modernizing Legacy QA Workflows

Companies stuck with outdated, siloed, or script-heavy automation frameworks can use qtrl to consolidate and modernize. The platform brings test management and execution into a single, user-friendly interface. Teams can gradually replace brittle scripts with AI-generated tests that are easier to maintain, reducing the long-term cost and complexity of test automation.

Ensuring Governance in Enterprise QA

Enterprises in regulated industries (like finance or healthcare) that require strict audit trails and compliance can trust qtrl. The platform provides full traceability from requirements to test execution, detailed logs of all AI agent activities, and enterprise-grade security. This allows large organizations to harness the power of AI for QA without sacrificing the control and documentation they need.

Supporting Product-Led Engineering Teams

Fast-moving product teams that need to ensure quality with every release can integrate qtrl into their CI/CD pipelines. Developers and QA can collaborate in one platform to create tests, get continuous quality feedback, and run automated checks across multiple environments. This helps ship features faster with confidence, maintaining a high bar for quality.

Overview

About Agenta

Agenta is your friendly, open-source platform designed to help teams build and ship reliable AI applications powered by large language models (LLMs). If you've ever felt frustrated by the unpredictable nature of LLMs, with prompts scattered everywhere and debugging feeling like guesswork, Agenta is here to help. It's built for the whole team (developers, product managers, and subject matter experts) to collaborate seamlessly.

The platform acts as your single source of truth, centralizing the entire LLM development workflow. You can experiment with different prompts and models, run automated evaluations to replace gut feelings with hard evidence, and observe your live applications to quickly pinpoint issues. By bringing everyone together and providing the right tools, Agenta transforms chaotic, siloed processes into a structured, efficient practice known as LLMOps, helping you move from experimentation to production with confidence.

Whether you're a developer tired of manual testing or a product manager needing visibility into AI performance, Agenta provides the integrated infrastructure for prompt management, evaluation, and observability you need to succeed.

About qtrl.ai

qtrl.ai is a modern, intelligent QA platform designed to help software teams scale their quality assurance efforts the smart way. It's built for teams who are tired of choosing between slow, manual testing and complex, brittle automation. qtrl provides a single, centralized hub where you can organize all your test cases, plan detailed test runs, and see exactly how your testing maps back to requirements. This gives engineering leads and QA managers crystal-clear visibility into what's been tested, what's passing, and where potential risks might be hiding.

What truly sets qtrl apart is its thoughtful, progressive approach to AI. Instead of forcing you into a risky "black-box" AI system from day one, qtrl lets you start simple with solid test management. When you're ready, you can gradually introduce powerful, trustworthy AI automation. The platform's autonomous agents can generate real browser tests from plain English instructions, keep those tests updated as your application changes, and run them at scale. This makes qtrl perfect for product-led engineering teams, QA groups moving beyond manual processes, companies modernizing old workflows, and any enterprise that needs strict compliance and audit trails. Ultimately, qtrl bridges the gap, offering a trusted, controlled path to faster and more intelligent quality assurance.

Frequently Asked Questions

Agenta FAQ

Is Agenta really open-source?

Yes, Agenta is fully open-source. You can dive into the code on GitHub, contribute to the project, and self-host the platform. This gives you full control over your data and infrastructure while benefiting from a tool built and vetted by a community of hundreds of AI builders.

What AI frameworks does Agenta work with?

Agenta is designed to be flexible and model-agnostic. It seamlessly integrates with popular frameworks like LangChain and LlamaIndex, and works with models from any provider, including OpenAI, Anthropic, and open-source models. This prevents vendor lock-in and lets you use the best model for each task.

How does Agenta help with collaboration?

Agenta provides a single platform that serves both technical and non-technical team members. It offers a no-code UI for experts to edit prompts and run evaluations, while providing a full API for developers. This shared "source of truth" for prompts, tests, and traces ensures everyone is aligned and can contribute effectively.

Can I use Agenta to monitor live applications?

Absolutely. Agenta's observability features allow you to trace every request to your live LLM application. You can monitor performance, detect regressions with online evaluations, and gather user feedback on specific outputs. This continuous oversight is crucial for maintaining and improving reliable AI systems in production.

qtrl.ai FAQ

How does qtrl.ai's AI work? Is it a "black box"?

No, qtrl is designed to be transparent and trustworthy. Its AI does not make hidden decisions. When it generates test steps from your instructions, you can see, review, and edit every single action it proposes. You have full visibility into what the autonomous agents are doing, and they operate only within the rules and permissions you define, ensuring you are always in control.

Can I use qtrl if my team only does manual testing right now?

Absolutely! qtrl is built for progression. You can start by using it as a powerful test management tool to organize your manual test cases, plans, and runs. When you feel ready to explore automation, the AI features are right there for you to start using gradually. There's no need to change your entire process overnight.

What kind of tests can the Autonomous QA Agents run?

The agents run real, end-to-end tests in actual browser environments (like Chrome or Firefox). They interact with your web application just like a human would—clicking buttons, filling forms, and validating content. They are not simulations, so you get accurate results that reflect the true user experience across different browsers and test environments.

How does qtrl handle security and sensitive data?

Security is a top priority. qtrl uses enterprise-ready security practices. Sensitive data like passwords and API keys are stored as encrypted environment variables and secrets. Crucially, these secrets are never exposed to or accessible by the AI agents during test execution, keeping your sensitive information completely safe.
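One common way to keep secrets away from an AI agent is to let the agent plan with named placeholders and substitute the real values only at execution time. The sketch below illustrates that general pattern. It is an assumption about how such a design could work, not a description of qtrl's implementation, and the `{{NAME}}` syntax, `resolve`, and `redact` are hypothetical.

```python
import re

PLACEHOLDER = re.compile(r"\{\{(\w+)\}\}")


def resolve(step: str, secrets: dict[str, str]) -> str:
    """Substitute {{NAME}} placeholders just before a step is executed."""
    def lookup(match: re.Match) -> str:
        name = match.group(1)
        if name not in secrets:
            raise KeyError(f"unknown secret: {name}")
        return secrets[name]

    return PLACEHOLDER.sub(lookup, step)


def redact(text: str, secrets: dict[str, str]) -> str:
    """Replace secret values with their placeholder before logging."""
    for name, value in secrets.items():
        if value:  # skip empty values, which would match everywhere
            text = text.replace(value, "{{" + name + "}}")
    return text


# The agent only ever sees and produces the placeholder form.
planned_step = 'type "{{LOGIN_PASSWORD}}" into #password'
secrets = {"LOGIN_PASSWORD": "hunter2"}  # would come from an encrypted store

executed_step = resolve(planned_step, secrets)  # seen by the browser driver only
logged_step = redact(executed_step, secrets)    # safe to store or show the agent
print(logged_step)
```

In this arrangement the model's context and the stored logs contain only `{{LOGIN_PASSWORD}}`, while the real value exists only inside the executor.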

Alternatives

Agenta Alternatives

Agenta is an open-source platform in the LLMOps category, designed to help teams build and manage reliable AI applications. It centralizes the workflow for experimenting with prompts, evaluating models, and observing live apps, making collaboration between developers and non-technical team members much smoother.

People often explore alternatives to Agenta for various reasons. They might need a different pricing model, require specific integrations with their existing tech stack, or look for a tool that offers a different set of features or a different user experience. It's a natural part of finding the perfect fit for a team's unique workflow and budget.

When choosing an alternative, focus on what matters most for your project. Consider the platform's core capabilities for experimentation and evaluation, how well it supports team collaboration, and its approach to security and data privacy. The goal is to find a solution that brings structure to your AI development process, helping your team move from ideas to production with confidence.

qtrl.ai Alternatives

qtrl.ai is a modern QA platform in the automation and dev tools category. It helps software teams scale their testing efforts by combining structured test management with intelligent AI agents. This approach allows teams to automate tests while maintaining full control and governance over the entire quality assurance process.

Users often explore alternatives for various reasons. These can include budget constraints, the need for different feature sets, or specific platform requirements like integration with existing tools. Some teams might be looking for a simpler entry point or a solution tailored to a very niche testing methodology.

When evaluating different options, it's wise to consider a few key areas. Look at how the platform balances automation power with ease of use and control. Check if it can grow with your team's needs and whether it provides the visibility and reporting that your managers require. The right fit should align with your team's current workflow while offering a clear path to more advanced testing.